When the first Pixel launched, it shipped with an exclusive piece of software, the Google Assistant, and at MWC the following year Google announced the rollout of the Assistant to devices worldwide. Similarly, the Pixel 2 had Google Lens, and at the recent MWC Google announced that the feature would roll out globally for Android and iOS, accessible through the Google Photos app. Google also announced that certain devices from manufacturers such as Samsung, Motorola, LG and HMD Global/Nokia, among others, would be able to access the feature from the Google Assistant itself.

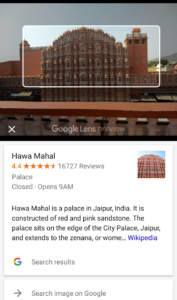

Google Lens was announced by the company at Google I/O 2017 and, put simply, is a visual search engine. The app uses some heavyweight algorithms to scan images, videos or even a live camera feed and provide contextual information. For example, point it at a business card and the details are automatically saved as a contact, or point it at a book to check its reviews. You can also get details about paintings in museums, information about landmarks, and restaurant or hotel reviews just by pointing your camera at them. All of this makes it sound similar to Bixby, and in some ways it is.

Lens can do some other cool things too, such as identifying the breed of a cat or dog, or connecting to a WiFi network just by scanning the router's settings sticker.

The feature is being rolled out in stages, so Android users may not be able to access it just yet. As for iOS, there is currently no release date in sight.

The feature can be found next to the delete button in the Google Photos app. Once you tap it, Lens scans your image and provides relevant information.

Rolling out today, Android users can try Google Lens to do things like create a contact from a business card or get more info about a famous landmark. To start, make sure you have the latest version of the Google Photos app for Android: https://t.co/KCChxQG6Qm

Coming soon to iOS pic.twitter.com/FmX1ipvN62 (Google Photos, @googlephotos, March 5, 2018)

Abhinandan Jain

19-year-old tech enthusiast. Covers leaks, news and software updates related to some of the biggest and most prominent OEMs in the Android space.